AI infrastructure scarcity is widely misunderstood. While public narratives fixate on GPU shortages, the binding constraints sit elsewhere — in advanced packaging and high-bandwidth memory. Using exmxc’s Four Forces lens, this Signal Brief introduces a Margin Comparison Ratio to explain where durable economic rents actually accumulate in the AI compute stack, and why margin alone is a misleading signal without scarcity context.

The Narrative Error

AI compute scarcity is commonly framed as a GPU problem. This framing is incomplete. Logic supply has scaled with capital, wafer access, and yield improvement. Yet system delivery remains constrained. The result is a persistent gap between demand and deployable compute that cannot be explained by GPUs alone.

The constraint is structural, not cyclical.

The Physical Stack Reality

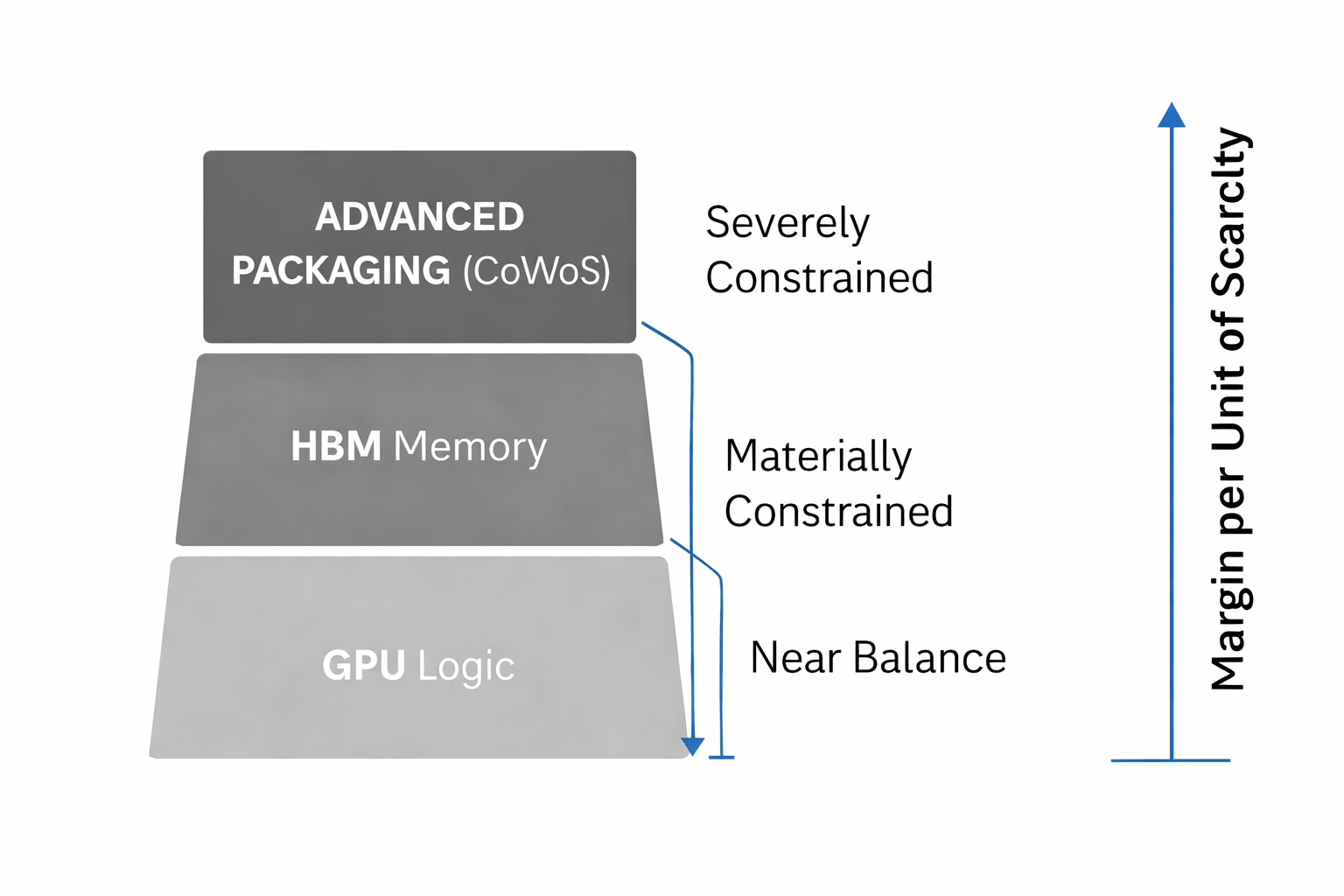

Modern AI systems are assembled, not merely fabricated. A deployable accelerator requires three interdependent layers:

- Logic (GPU / accelerator die)

- High-bandwidth memory (HBM)

- Advanced packaging (CoWoS-class integration)

Logic supply is approaching equilibrium; memory and packaging supply are not. Each step from logic to memory to packaging introduces time-bound, non-substitutable processes that scale slowly and resist rapid capital expansion.

Constraint Ratios (Directional)

Observed supply-to-demand dynamics across the stack are asymmetric:

- GPU logic: near balance

- HBM: materially constrained

- Advanced packaging: severely constrained

This inversion, in which the least-discussed layers are the most constrained, explains why system availability lags headline chip production and why allocation, not price, determines access.
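
The bottleneck logic described above can be sketched in a few lines. This is a minimal illustration, not exmxc's model: the function name, the layer keys, and every number are hypothetical placeholders chosen only to mirror the directional ordering (logic near balance, HBM tighter, packaging tightest). It assumes each deployable system needs a fixed quantity from every layer, so the layer that supports the fewest systems sets the ceiling.

```python
from typing import Dict, Tuple


def deployable_systems(
    capacity: Dict[str, float], per_system: Dict[str, float]
) -> Tuple[float, str]:
    """Return (max deployable systems, binding layer).

    Assumes a fixed bill of materials per system, so output is capped
    by whichever layer supports the fewest complete systems.
    """
    supported = {
        layer: capacity[layer] / per_system[layer] for layer in per_system
    }
    binding = min(supported, key=supported.get)
    return supported[binding], binding


# Hypothetical placeholder inputs, not observed data.
EXAMPLE_CAPACITY = {"logic_dies": 100, "hbm_stacks": 480, "packaging_slots": 60}
EXAMPLE_PER_SYSTEM = {"logic_dies": 1, "hbm_stacks": 6, "packaging_slots": 1}

if __name__ == "__main__":
    systems, binding = deployable_systems(EXAMPLE_CAPACITY, EXAMPLE_PER_SYSTEM)
    print(systems, binding)  # 60.0 packaging_slots
```

With these placeholder inputs, logic could support 100 systems and HBM 80, but only 60 can be assembled, so adding logic dies changes nothing until packaging capacity grows.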

Introducing the Margin Comparison Ratio

Gross margin alone does not indicate economic power. exmxc introduces the Margin Comparison Ratio (MCR):

Margin Comparison Ratio = Gross Margin ÷ Constraint Intensity

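This ratio measures how effectively a layer converts scarcity into durable margin. Because constraint intensity sits in the denominator, a layer with modest margin and severe constraint scores low, which is best read as scarcity not yet fully reflected in headline margin rather than as weakness. Layers with lower absolute margins but higher constraint intensity often exhibit stronger long-term pricing power than layers with higher headline margins but weaker scarcity.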
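
To make the arithmetic concrete, here is a minimal sketch of the ratio as defined above. The brief does not specify a scale for constraint intensity, so this example assumes a unitless positive score where a higher value means a tighter constraint. The `Layer` type, the function names, and all input values are hypothetical placeholders, not reported margins or measured constraints.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Layer:
    name: str
    gross_margin: float          # fraction between 0 and 1 (placeholder)
    constraint_intensity: float  # assumed unitless score, higher = tighter


def margin_comparison_ratio(layer: Layer) -> float:
    """Gross margin divided by constraint intensity, per the definition above."""
    if layer.constraint_intensity <= 0:
        raise ValueError("constraint_intensity must be positive")
    return layer.gross_margin / layer.constraint_intensity


def rank_by_mcr(layers: List[Layer]) -> List[Layer]:
    """Sort layers from lowest to highest ratio."""
    return sorted(layers, key=margin_comparison_ratio)


# Hypothetical placeholder values, chosen only to follow the directional
# ordering in the text (logic near balance, packaging most constrained).
EXAMPLE_LAYERS = [
    Layer("GPU logic", gross_margin=0.70, constraint_intensity=1.0),
    Layer("HBM", gross_margin=0.50, constraint_intensity=2.0),
    Layer("Advanced packaging", gross_margin=0.45, constraint_intensity=3.0),
]

if __name__ == "__main__":
    for layer in rank_by_mcr(EXAMPLE_LAYERS):
        print(f"{layer.name}: {margin_comparison_ratio(layer):.2f}")
```

Under these placeholder inputs the layer with the highest headline margin also has the highest ratio, while the most constrained layer has the lowest. That is the pattern the text describes: margin and scarcity do not rank layers the same way.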

What the Margin Lens Reveals

- GPU vendors capture margin through orchestration and allocation, not fabrication

- Memory suppliers benefit when constraint tightens, but margins remain cyclically sensitive

- Advanced packaging captures scarcity rent through time, specialization, and non-replicability

The most durable margins accrue where substitution is slowest and scaling is governed by accumulated process know-how (process memory) rather than capital.

Four Forces Interpretation

Compute

Compute is no longer logic-bound. It is assembly-bound.

Interface

Inference demand will increasingly route around the most constrained layers, pulling architectures over time toward designs that need less HBM and less advanced packaging per unit of compute.

Alignment

Capital continues to flow toward the visible constraints, while the physical bottlenecks in memory and packaging remain underestimated.

Energy

Packaging density indirectly caps energy efficiency gains, reinforcing upstream scarcity.

What to Watch

- Expansion cadence of advanced packaging capacity

- Memory yield improvements relative to system demand

- Architectural shifts reducing per-unit HBM intensity

These signals, not GPU shipment headlines, determine real compute availability.

Closing Signal

AI infrastructure economics are governed by the narrowest pipe, not the loudest narrative. Scarcity-adjusted margin durability concentrates upstream, where time — not capital — is the binding constraint.

Related Reading

Four Forces of AI Power

The Cognitive Thermodynamics of Power

Compute Power Shift: Nvidia Locks the Inference Future Into Its Stack